Similar todos

Run openllm dollyv2 on local linux server

Starting up local server #olisto

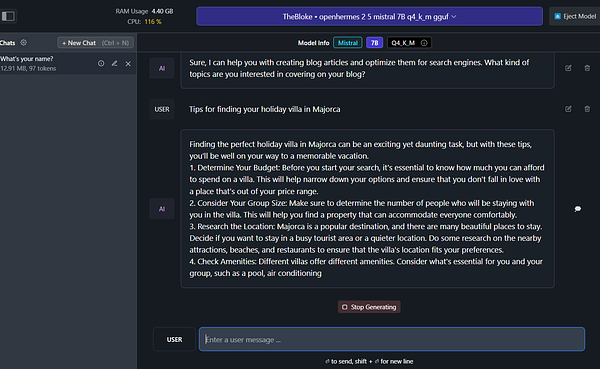

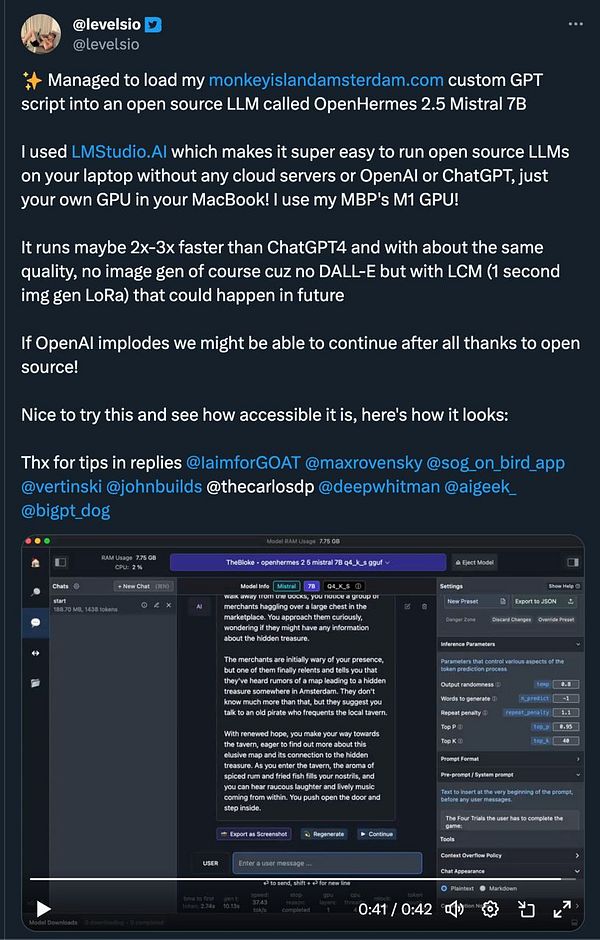

try loading #monkeyisland in my own local LLM

get the app running locally #rikko

get AIE app running locally so I can dev the API #aiplay

try client side web based Llama 3 in JS  #life webllm.mlc.ai/

prototype a simple autocomplete using local llama2 via Ollama  #aiplay

📝 prototyped an llm-ollama plugin tonight. models list and it talks to the right places. prompts need more work.

Set up local database #workalo

Over the past three and a half days, I've dedicated all my time to developing a new product that uses a locally running Llama 3.1 for real-time AI responses.

It's now available on Macs with M series chips – completely free, local, and incredibly fast.

Get it: Snapbox.app  #snapbox

Ran some local LLM tests 🤖

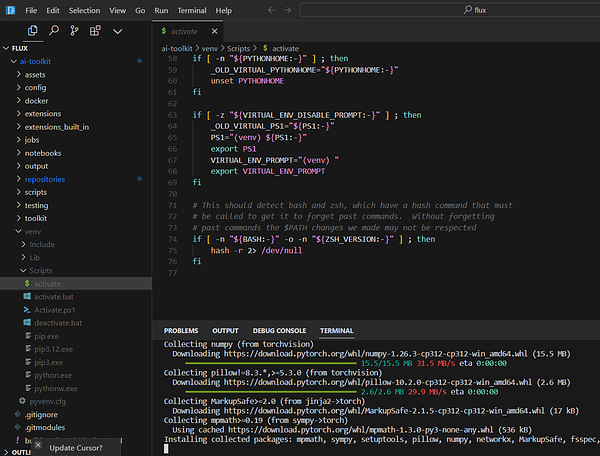

work on setting up the system locally #labs

Get the app started  #paperclip

✏️ wrote about running Llama 3.1 locally through Ollama on my Mac Studio. micro.webology.dev/2024/07/24…

set up project locally #lite