Similar todos

check out Llama 3.1 #life

PR to LlamaIndex merged. OpenAPI Spec loader :)

wrote a guide on Llama 3.2 #getdeploying

use Llama 3 70B to create a transcript summary #spectropic

switch #therapistai to Llama 3.1

tested a Llama model to scan newsletters #sponsorgap

try client-side, web-based Llama 3 in JS #life webllm.mlc.ai/

prototype a simple autocomplete using a local Llama 2 via Ollama #aiplay

read the Llama Guard paper #aiplay

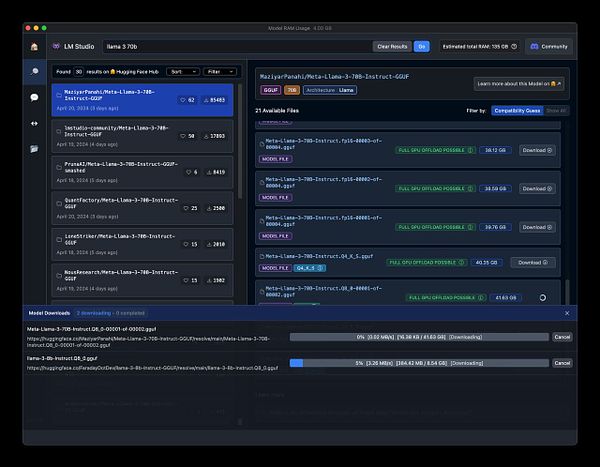

✏️ wrote about running Llama 3.1 locally through Ollama on my Mac Studio. micro.webology.dev/2024/07/24…

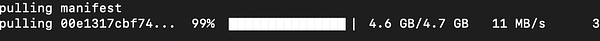

Playing with Llama 2 locally and running it for the first time on my machine

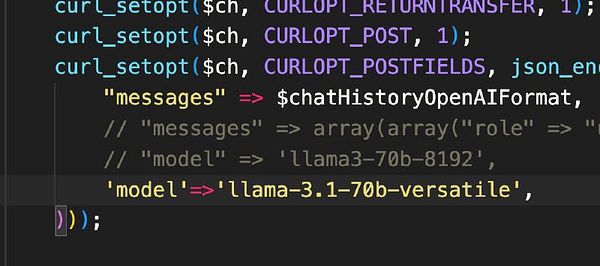

got Llama 3 on Groq working with Cursor 🤯

🤖 Tried out Llama 3.3 and the latest Ollama client for what feels like flawless local tool calling. #research

test #therapistai with Llama 3 70B

🤖 Updated some scripts to use Ollama's latest structured output with Llama 3.3 (latest), then fell back to Llama 3.2. Per-request time dropped from over a minute with 3.3 to 2 to 7 seconds with 3.2, and I can't see a difference in the results. For small projects, 3.2 is the better path. #research