Similar todos

🤖 played with Aider and it's mostly working with Ollama + Llama 3.1  #research

🤖 Tried out Llama 3.3 and the latest Ollama client for what feels like flawless local tool calling.  #research

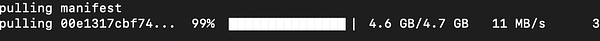

🤖 got llama-cpp running locally 🐍

🤖 played around with adding extra context in some local Ollama models. Trying to test out some real-world tasks I'm tired of doing.  #research

✏️ wrote about running Llama 3.1 locally through Ollama on my Mac Studio. micro.webology.dev/2024/07/24…

🤖 played with Ollama's tool calling with Llama 3.2 to create a calendar management agent demo  #research

✏️ I wrote and published my notes on using the Ollama service micro.webology.dev/2024/06/11…

🤖 spent some time getting Ollama and LangChain to work together. I hooked up tooling/function calling and noticed that I was only getting a match on the first function call. Kind of neat but kind of a pain.

🤖 spent my evening writing a better console for some more advanced Ollama + Llama 3.1 projects.  #research

More Ollama experimenting  #research

Run OpenLLM dolly-v2 on a local Linux server

🦙 added Ollama and its settings to my  #dotfiles since it works so well

Just finished and published a web interface for Ollama  #ollamachat

🤖 Created an Ollama + Llama 3.2 version of my job parser to compare to ChatGPT. It's not bad at all, but not as good as GPT-4.  #jobs

🤖 Updated some scripts to use Ollama's latest structured output with Llama 3.3 (latest), then fell back to Llama 3.2. Requests dropped from over a minute with 3.3 to 2 to 7 seconds with 3.2, and I can't see a difference in the results. For small projects, 3.2 is the better path.  #research

🤖 more work with Ollama and Llama 3.1, building a story writer as a good-enough demo.  #research

🤖 moved standup bot to docker  #bots
